Explore how deep learning neural networks revolutionize AI, enabling machines to see, speak, and think like humans.

Understanding how deep learning neural networks function can open new doors for you, whether you are curious about technology, aiming to boost your career, or simply wanting to grasp the magic behind smart apps. Dive in, and you’ll discover how these networks are shaping the future, one layer at a time.

Credit: botpenguin.com

Deep Learning Basics

Deep learning is a branch of machine learning, itself a subfield of artificial intelligence, that uses multi-layered neural networks to learn from data. It is loosely inspired by how the human brain processes information. These networks can recognize patterns and make predictions without hand-written rules. Understanding the basics helps to grasp how deep learning works and why it matters.

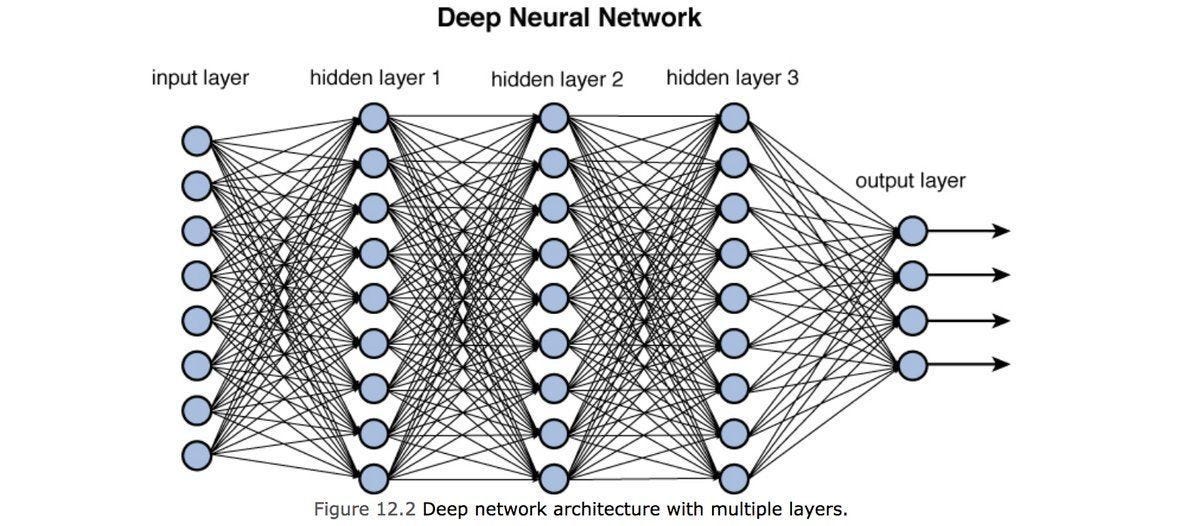

Neural Network Structure

A neural network is made up of layers of nodes, also called neurons. Each node receives input, processes it, and passes the result to the next layer. The first layer takes in raw data, and the last layer produces the output. Between them are hidden layers that transform the data step by step.
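The layer-by-layer flow described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the function names, the sigmoid activation, and all weight values are assumptions chosen for the example.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases):
    """One layer: each node takes a weighted sum of all inputs,
    adds a bias, and applies the activation function."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

def forward(inputs, layers):
    """Pass the data through every layer in order, from input to output."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical network: 2 inputs -> 3 hidden nodes -> 1 output node.
hidden = ([[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]], [0.0, 0.1, -0.1])
output = ([[0.7, -0.5, 0.2]], [0.05])

prediction = forward([1.0, 2.0], [hidden, output])
print(prediction)  # a list holding one value between 0 and 1
```

Each call to `dense_layer` plays the role of one layer, and `forward` chains them so the output of one layer becomes the input of the next.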

Role Of Layers And Nodes

Layers play different roles in a neural network. Input layers collect data. Hidden layers extract features and patterns. Output layers deliver the final prediction or classification. Nodes in each layer work together to analyze parts of the data. More layers allow the network to learn complex patterns.

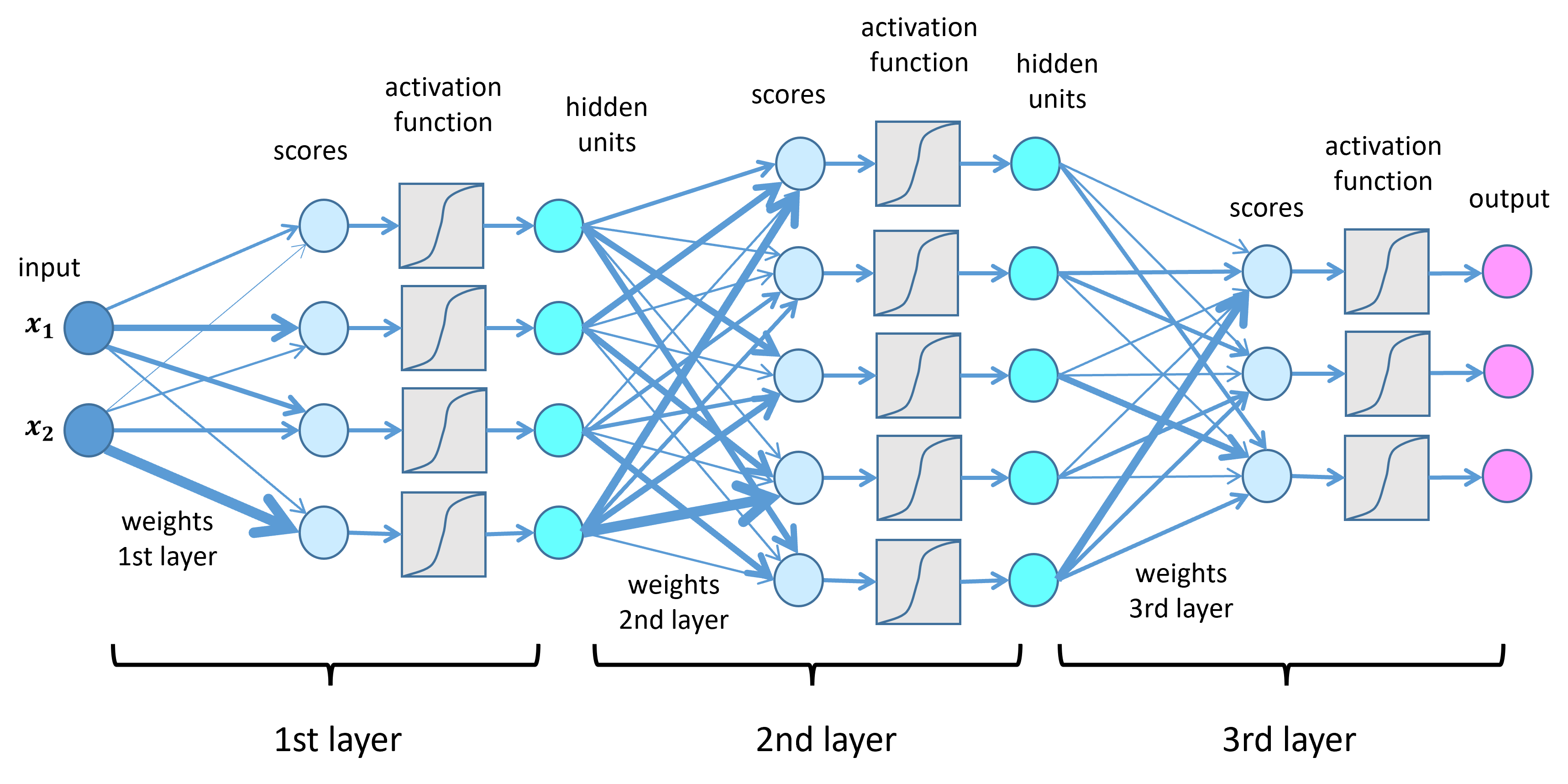

Weights And Connections

Connections between nodes have weights, which adjust as the network learns. These weights control the influence one node has on another. A higher weight means a stronger connection. The network changes weights during training to improve accuracy. This process helps the network understand data better.
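To see how a weight controls influence, consider a single node that adds up its weighted inputs. The sketch below uses made-up numbers and a hypothetical `weighted_sum` helper; it only shows that the same input counts for more when its connection weight is higher.

```python
def weighted_sum(inputs, weights, bias=0.0):
    """Combine incoming signals: each input is scaled by its connection weight."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias

inputs = [1.0, 1.0]

# Same inputs, but the first connection is weak in one case and strong in the other.
weak = weighted_sum(inputs, weights=[0.1, 0.5])
strong = weighted_sum(inputs, weights=[0.9, 0.5])

print(weak, strong)  # the second value is larger because of the heavier first weight
```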

Credit: www.ibm.com

How Neural Networks Learn

Neural networks learn by adjusting their internal structure through a process called training. This process allows them to improve their ability to solve problems over time. Learning happens by finding patterns in data and making small changes to the network’s connections. These changes help the network produce better results with each attempt.

Training Process

The training process involves feeding data into the neural network. The network makes predictions based on this data. Then, it compares the predictions with the correct answers. It measures the gap between the two as an error value, often called the loss. This error guides the network on how to improve its predictions.
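One common way to turn "how wrong was the prediction" into a single number is mean squared error. The example below is a sketch with invented predictions and targets; real projects may use a different error measure depending on the task.

```python
def mean_squared_error(predictions, targets):
    """Average of the squared differences between predictions and correct answers."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

# Hypothetical outputs from a network and the correct answers for three examples.
predictions = [0.9, 0.2, 0.6]
targets = [1.0, 0.0, 1.0]

loss = mean_squared_error(predictions, targets)
print(loss)  # smaller values mean the predictions are closer to the targets
```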

Adjusting Connections

Inside the network, many connections link neurons. Each connection has a weight, which controls the strength of the signal. During training, the network adjusts these weights. It increases or decreases them to reduce errors. This adjustment helps the network learn the right patterns.
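The weight adjustment is usually done with gradient descent: the network nudges each weight in the direction that lowers the error. The sketch below trains a single weight on a toy dataset. The data, learning rate, and number of steps are assumptions picked so the example converges quickly.

```python
def train_weight(xs, ys, weight=0.0, learning_rate=0.05, steps=200):
    """Fit y = weight * x by repeatedly stepping against the error gradient."""
    n = len(xs)
    for _ in range(steps):
        # Gradient of the mean squared error with respect to the weight.
        gradient = sum(2 * (weight * x - y) * x for x, y in zip(xs, ys)) / n
        # Move the weight a small step in the direction that reduces the error.
        weight -= learning_rate * gradient
    return weight

# Toy data generated by the rule y = 3 * x.
xs = [1.0, 2.0, 3.0]
ys = [3.0, 6.0, 9.0]

learned = train_weight(xs, ys)
print(learned)  # ends up very close to 3
```

A real network repeats the same idea for every weight at once, using backpropagation to compute each gradient.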

Pattern Recognition

Neural networks excel at recognizing complex patterns. They find hidden relationships in data that humans may miss. By learning these patterns, networks can classify images, understand speech, or translate languages. This ability makes neural networks powerful tools in deep learning.

Deep Vs Traditional Machine Learning

Deep learning and traditional machine learning are two key approaches in artificial intelligence. Both aim to teach computers how to learn from data. Yet, they differ greatly in design and function. Understanding these differences helps in choosing the right method for specific tasks.

Model Complexity

Traditional machine learning models often use simple algorithms. These include decision trees, support vector machines, and linear regression. They require less computational power and less data. Deep learning models, on the other hand, consist of many layers of neurons. These layers process data through complex transformations. This structure allows deep learning to handle more complicated tasks.
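One rough way to compare complexity is to count trainable parameters. The sketch below counts weights and biases for fully connected layers. The layer sizes are hypothetical and chosen only to show the gap between a linear model and a small deep network.

```python
def dense_parameter_count(layer_sizes):
    """Weights plus biases for a fully connected network with the given layer sizes."""
    return sum(
        n_in * n_out + n_out
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )

# Linear regression on 20 features: one weight per feature plus one bias.
linear_regression = dense_parameter_count([20, 1])

# A small deep network on the same 20 features with two hidden layers of 64 nodes.
deep_network = dense_parameter_count([20, 64, 64, 1])

print(linear_regression, deep_network)  # 21 5569
```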

Interpretability Challenges

Traditional models are easier to understand. Their decisions are often transparent and explainable. This makes it simple to trace how results are produced. Deep learning models act like a “black box.” They have many interconnected layers, making it hard to interpret how they reach conclusions. This can be a problem in fields needing clear explanations.

Use Cases Comparison

Traditional machine learning suits tasks with structured data. Examples include credit scoring and spam detection. Deep learning excels with unstructured data like images, audio, and text. It powers technologies such as voice assistants and image recognition. Both approaches have valuable roles depending on the problem and data type.

Key Architectures In Deep Learning

Deep learning uses different neural network architectures to solve complex problems. Each architecture suits specific tasks and data types. Understanding key architectures helps grasp how deep learning models work.

These architectures have unique designs and learning methods. They shape how machines recognize images, understand language, or predict sequences. Here are the main types of deep learning networks widely used today.

Convolutional Networks

Convolutional Neural Networks (CNNs) excel at processing images. They use layers that focus on small regions of input data. CNNs detect patterns like edges, shapes, and textures. These networks reduce data size while keeping important features. They are commonly used in image recognition and video analysis.
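The "small regions" idea can be illustrated by sliding a tiny filter over an image. The sketch below uses a hand-written vertical-edge filter on a 4x4 image. In a real CNN the filter values are learned during training, so the kernel here is an assumption for demonstration.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and return the
    feature map. Like most deep learning libraries, this does not flip the
    kernel, so strictly speaking it is a cross-correlation."""
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = len(image) - k_h + 1
    out_w = len(image[0]) - k_w + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = sum(
                image[i + a][j + b] * kernel[a][b]
                for a in range(k_h)
                for b in range(k_w)
            )
            row.append(total)
        feature_map.append(row)
    return feature_map

# Dark left half (0) and bright right half (1): a vertical edge in the middle.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
vertical_edge = [[-1, 1], [-1, 1]]  # hand-picked filter that responds to dark-to-bright steps

feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is smaller than the input (3x3 instead of 4x4) and is non-zero only where the edge sits.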

Recurrent Networks

Recurrent Neural Networks (RNNs) handle sequential data like text or time series. They have loops that allow information to persist. This helps understand context in sentences or past events in data. Variants like LSTM and GRU improve memory and performance. RNNs are useful in language modeling, speech recognition, and forecasting.
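The loop that lets information persist can be shown with a one-unit recurrent cell. This is a simplified sketch, not an LSTM or GRU: the weights `w_x` and `w_h` are assumed values, and a real RNN uses vectors and matrices instead of single numbers.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One time step: mix the new input with the previous hidden state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Feed a sequence through the cell, carrying the hidden state forward."""
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

# Only the first input is non-zero, yet later states still remember it.
states = run_rnn([1.0, 0.0, 0.0])
print(states)  # three positive values that shrink over time
```

The fading values also hint at why plain RNNs struggle with long sequences, which is the problem LSTM and GRU cells are designed to ease.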

Transformer Models

Transformers use self-attention to process data in parallel. They weigh the importance of each part of the input differently. This design captures long-range dependencies better than RNNs. Transformers power many language models today, including ChatGPT. They excel in tasks like translation, summarization, and text generation.

Transformers And Language Models

Transformers have changed how machines understand language. They power many modern language models. These models read, analyze, and generate text based on context. Their design excels at handling complex language tasks. They process information differently than older neural networks. This makes them highly effective for natural language processing.

Sequential Data Processing

Transformers handle sequential data in a unique way. They look at all words at once, not one by one. This allows them to find relationships between words far apart. They use attention mechanisms to focus on important parts of the text. This method improves understanding of sentence meaning and structure. It helps models learn context and nuance better.
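The attention mechanism can be sketched as scaled dot-product self-attention. The example below is a simplification under stated assumptions: the token vectors are invented, and the same vectors serve as queries, keys, and values, whereas real Transformers learn separate projections for each role.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """For every token, score it against all tokens at once, then blend the
    value vectors using those scores as weights."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        mixed = [
            sum(w * v[dim] for w, v in zip(weights, values))
            for dim in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-dimensional vectors for three tokens; tokens 0 and 2 are alike.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens, tokens, tokens)

print(weights[0])  # token 0 gives more weight to token 2 than to its neighbor, token 1
```

Distance in the sequence plays no part in the scores, which is why related words far apart can still attend to each other.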

Contextual Text Generation

Language models generate text based on context they learn. They predict the next word by considering all previous words. This makes their output more natural and coherent. They can write essays, answer questions, and create stories. Their ability to keep context helps maintain flow in long texts. It allows them to produce human-like language easily.
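Next-word prediction comes down to scoring every candidate word and choosing from the resulting probabilities. The sketch below uses a three-word vocabulary and invented scores. A real model computes these scores from the full context and works over a vocabulary of many thousands of tokens.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def pick_next_word(vocabulary, scores):
    """Greedy decoding: return the word with the highest probability."""
    probabilities = softmax(scores)
    best = max(range(len(vocabulary)), key=lambda i: probabilities[i])
    return vocabulary[best], probabilities

vocabulary = ["mat", "moon", "car"]
# Hypothetical scores a model might assign after reading "the cat sat on the".
scores = [3.0, 1.0, 0.5]

word, probabilities = pick_next_word(vocabulary, scores)
print(word)  # mat
```

Generating a longer text repeats this step, appending each chosen word to the context before predicting the next one. Many systems sample from the probabilities instead of always taking the top word.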

ChatGPT As A Neural Network

ChatGPT is a large neural network built on the Transformer design. It was trained on massive amounts of text data. This training helps it pick up patterns and language rules. It generates responses by predicting one word (more precisely, one token) at a time. ChatGPT’s neural network architecture enables it to hold conversations. It adapts to different topics and replies naturally.

Applications Unlocking AI Potential

Deep learning neural networks power many modern AI tools. They help computers learn complex patterns from data. This ability opens many practical uses across fields. These applications improve technology and daily life. Below are key areas where deep learning shows strong impact.

Image And Speech Recognition

Neural networks analyze images and sounds with high accuracy. They identify objects in photos quickly. Speech recognition systems convert spoken words into text. This technology helps virtual assistants understand commands. It also aids in transcription services and accessibility tools.

Natural Language Processing

Deep learning models process and understand human language. They enable chatbots to hold conversations with users. Text translation becomes faster and more accurate. Sentiment analysis helps businesses gauge customer feelings. These networks understand context and meaning in sentences.

Autonomous Systems

Self-driving cars use neural networks to interpret sensor data. They detect obstacles and help the vehicle make driving decisions. Drones navigate complex environments using deep learning. Robots perform tasks with more precision and adaptability. These systems aim to reduce human error and increase efficiency.

Challenges In Deep Learning

Deep learning neural networks have transformed many fields, from image recognition to natural language processing. Despite their power, these networks face several challenges that affect their performance and usability. Understanding these challenges helps us appreciate the complexities involved in deploying deep learning solutions.

These challenges range from the high computational power needed to the demand for large datasets. Transparency and bias in deep learning models also raise important concerns. Each aspect plays a crucial role in shaping the future of this technology.

Computational Demands

Deep learning models require vast computational resources. Training complex networks can take days or weeks. Powerful GPUs or TPUs are often necessary to speed up this process. This need limits access for smaller organizations or individuals. Energy consumption during training also raises environmental concerns.

Data Requirements

Deep learning needs large amounts of data to perform well. More data usually means better accuracy in predictions. Gathering and labeling this data can be expensive and time-consuming. In some cases, data privacy issues limit access to necessary datasets. Poor-quality data can lead to weak or biased models.

Transparency And Bias

Deep learning models act as “black boxes” with little explanation for decisions. This lack of transparency makes it hard to trust the results. Bias in training data can cause unfair or incorrect outputs. Detecting and fixing these biases is a major challenge. Ensuring fairness and accountability remains a top priority.

Future Trends In Neural Networks

The future of deep learning neural networks holds exciting possibilities. Advances aim to make networks smarter, faster, and more versatile. These developments will impact many industries and everyday technology. Understanding upcoming trends helps prepare for new AI capabilities.

Improved Architectures

Neural network designs will become more complex yet efficient. New layers and structures will better capture data patterns. Architectures like transformers will evolve to handle larger tasks. These improvements will allow networks to learn faster and with less data. The focus will shift toward creating adaptable and scalable models.

Efficient Training Methods

Training neural networks requires a lot of time and power. Future methods aim to reduce this demand. Techniques such as pruning and quantization make trained models smaller and quicker to run. Transfer learning lets networks reuse knowledge across tasks, so less training is needed from scratch. These steps can lower costs and energy use in AI development.
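Pruning and quantization are easier to picture with a handful of numbers. The sketch below shows one simple variant of each: magnitude pruning (zeroing the smallest weights) and 8-bit quantization with a single shared scale. The weight values and threshold are assumptions, and production toolkits use more refined schemes.

```python
def prune_by_magnitude(weights, threshold):
    """Set weights whose absolute value is below the threshold to zero."""
    return [0.0 if abs(w) < threshold else w for w in weights]

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.82, -0.03, 0.45, 0.01, -0.67]  # hypothetical trained weights

pruned = prune_by_magnitude(weights, threshold=0.05)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)

print(pruned)     # the two smallest weights are now 0.0
print(quantized)  # small integers that fit in one byte each
print(restored)   # close to the pruned weights, with a small rounding error
```

Storing one byte per weight instead of a 32-bit float cuts memory roughly fourfold, at the cost of the rounding error visible in `restored`.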

Broader AI Integration

Neural networks will integrate more deeply into everyday systems. AI will blend with robotics, healthcare, and smart devices seamlessly. This integration will enhance automation and decision-making. Networks will support more personalized and responsive user experiences. The goal is smoother cooperation between humans and machines.

Credit: lamarr-institute.org


Frequently Asked Questions

What Is A Neural Network In Deep Learning?

A neural network in deep learning is a system of interconnected artificial neurons. It processes data through multiple layers. Each connection has weights that adjust during learning. This structure enables the network to recognize patterns and make decisions. Neural networks power many AI applications today.

Is DL Harder Than ML?

Deep learning (DL) is a subset of machine learning (ML), so the fair comparison is with traditional ML. DL is generally harder to work with because of its multi-layered neural networks. It requires more data, computational power, and expertise to design and interpret than traditional ML models.

Is Deep Learning Using Neural Networks?

Yes, deep learning uses neural networks with multiple layers. These layers process data to learn complex patterns automatically.

Is ChatGPT A Neural Network?

Yes, ChatGPT is a neural network. It uses the Transformer architecture, a deep learning model designed for language processing and generation.

Conclusion

Deep learning neural networks are loosely modeled on the human brain’s structure. They learn by adjusting the weights of connections between neurons. These networks handle complex tasks like image and speech recognition. Their multiple layers help find patterns in large data sets. Deep learning continues to grow and impact many fields.

Understanding its basics opens doors to future technologies. Keep exploring to see how deep learning evolves next.